Exploring the Reddit JSON interface

Reddit's official API may be out of reach for most, but an unofficial alternative still works

Back in summer 2023, Reddit imposed heavy restrictions and steep costs on access to the platform’s previously free REST API, sharply curtailing the ability of independent researchers to study activity on the site. However, Reddit does still have a free API of sorts, in the form of JSON versions of most elements of the user interface. This article describes how to use this unofficial “API” to gather various forms of data from Reddit programmatically, including top-level posts, comments, and user profile information.

For almost any given Reddit URL that displays a human-readable page, there is a corresponding JSON URL that returns the same information in JSON format. While there is some inconsistency in the specifics, the JSON URLs are generally formed by appending .json to the path of the standard URL, along with optional query parameters that control things such as the maximum number of results to retrieve (limit) and how to sort them (sort).
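The URL transformation described above can be sketched in a few lines of Python. The helper name `to_json_url` is hypothetical, and the parameters in the example call (`sort`, `raw_json`, `limit`) are simply the ones used in the example URL later in this article; given the inconsistencies just mentioned, treat this as a rough sketch rather than a rule that holds for every page.

```python
from urllib.parse import urlencode, urlsplit, urlunsplit


def to_json_url(page_url, **params):
    """Return a guess at the JSON URL for a human-readable Reddit URL."""
    parts = urlsplit(page_url)
    # Append ".json" to the path, leaving any trailing slash in place
    # (".../new" becomes ".../new.json", ".../title/" becomes ".../title/.json").
    path = parts.path + ".json"
    # Optional query parameters, e.g. the result limit and the sort order.
    query = urlencode(params)
    return urlunsplit((parts.scheme, parts.netloc, path, query, ""))


print(to_json_url("https://www.reddit.com/r/ExtendedRangeGuitars/new",
                  sort="new", raw_json=1, limit=100))
```

Building the query string with `urlencode` rather than by hand keeps parameter values properly escaped if they ever contain special characters.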

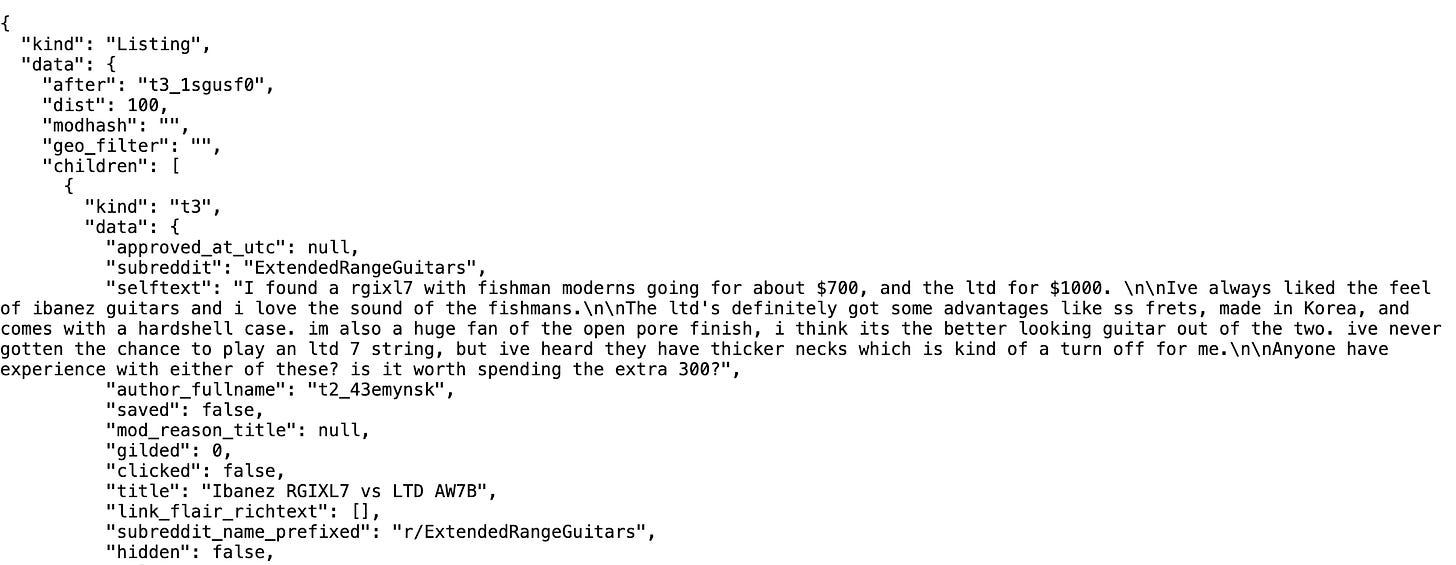

Below is an example of how this works for listing the posts on a given subreddit (specifically, /r/ExtendedRangeGuitars) in most-recent-first order. The first URL is the standard URL used by human users of the Reddit website; the second returns the same list of posts in JSON form.

https://www.reddit.com/r/ExtendedRangeGuitars/new

https://www.reddit.com/r/ExtendedRangeGuitars/new.json?sort=new&raw_json=1&limit=100

This process can be easily automated using Python (or any other popular programming language, for that matter).

    import json
    import sys
    import time

    import requests

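    def retry(url, retries=10, backoff=1.8, pause=20, max_pause=900):
        """Fetch a JSON URL, pausing and retrying on failure.

        Returns the parsed JSON, or None if every attempt fails.
        """
        print(url)
        while retries > 0:
            try:
                # Replace "placeholder" with a descriptive User-Agent string.
                result = requests.get(
                    url, headers={"User-Agent": "placeholder"}, timeout=10
                ).json()
                if "error" not in result:
                    return result
                print(result)
            except (requests.RequestException, ValueError):
                # Network failure or a non-JSON response; retry below.
                pass
            retries = retries - 1
            if retries > 0:
                print("error, sleeping " + str(pause) + " seconds...")
                time.sleep(pause)
                # Lengthen the pause after each failure, up to max_pause.
                pause = min(max_pause, pause * backoff)
        return None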

    def get_subreddit_posts(subreddit):
        """Return all posts available in a subreddit's "new" listing."""
        base_url = "https://www.reddit.com/r/" + subreddit \
            + "/new.json?sort=new&raw_json=1&limit=100"
        after = None
        rows = []
        while True:
            url = base_url + "&after=" + after if after else base_url
            response = retry(url)
            if response is None:
                # Every retry failed; return what was collected so far.
                break
            data = response["data"]["children"]
            if len(data) == 0:
                break
            rows.extend(data)
            # Posts arrive newest first, so the last post on the page is the
            # oldest; its fullname is the cursor for the next page. (Comparing
            # fullnames as strings can misorder IDs of different lengths.)
            after = data[-1]["data"]["name"]
        return rows

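    def get_thread_detail(permalink):
        """Return the JSON for a thread: the post and its comment tree."""
        base_url = "https://www.reddit.com" + permalink
        # Make sure the URL ends with a slash before appending ".json".
        if not base_url.endswith("/"):
            base_url = base_url + "/"
        base_url = base_url + ".json?raw_json=1"
        return retry(base_url)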

    def get_subreddit_posts_and_detail(subreddit):
        """Return a subreddit's posts, each with its thread detail attached."""
        posts = get_subreddit_posts(subreddit)
        for post in posts:
            post["detail"] = get_thread_detail(post["data"]["permalink"])
        return posts

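    def get_user_info(user):
        """Return profile data for a user account ([] if unavailable)."""
        base_url = "https://www.reddit.com/user/" + user + "/about.json"
        result = retry(base_url)
        # retry returns None when every attempt fails.
        return [] if not result else result["data"]

    def get_subreddits_moderated(user):
        """Return the subreddits moderated by a user ([] if none or unavailable)."""
        base_url = "https://www.reddit.com/user/" + user \
            + "/moderated_subreddits.json"
        result = retry(base_url)
        # An empty or missing response means there is nothing to report.
        return [] if not result else result["data"]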

    if __name__ == "__main__":
        # Arguments: subreddit|user|moderated NAME OUTPUT_FILE
        commands = {
            "subreddit": get_subreddit_posts_and_detail,
            "user": get_user_info,
            "moderated": get_subreddits_moderated,
        }
        if len(sys.argv) != 4 or sys.argv[1] not in commands:
            sys.exit("expected arguments: subreddit|user|moderated NAME OUTPUT_FILE")
        data = commands[sys.argv[1]](sys.argv[2])
        with open(sys.argv[3], "w") as file:
            json.dump(data, file, indent=2)

The above Python code contains functions for downloading the following four forms of Reddit data:

- a list of all recent posts in a given subreddit
- a hierarchical list of comments in a given thread
- profile information for a given user account
- a list of subreddits moderated by a given user account
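Because the comment data for a thread is hierarchical, it usually needs to be flattened before analysis. The following is a minimal sketch of one way to do that, assuming the thread JSON is a two-element list (the post, then a listing of top-level comments) in which each comment's `replies` field is either an empty string or a nested listing. The function name `flatten_comments` is hypothetical, and the sample data is made up to match the assumed shape.

```python
def flatten_comments(thread_detail):
    """Yield (depth, author, body) for every comment in a thread.

    Assumes thread_detail is the parsed thread JSON: a two-element list
    whose second element is a listing of top-level comments.
    """
    def walk(listing, depth):
        for child in listing["data"]["children"]:
            # Skip "more" placeholders, which stand in for comments
            # that were not included in this response.
            if child["kind"] != "t1":
                continue
            comment = child["data"]
            yield depth, comment.get("author"), comment.get("body")
            replies = comment.get("replies")
            # "replies" is an empty string when there are none.
            if replies:
                yield from walk(replies, depth + 1)

    yield from walk(thread_detail[1], 0)


# Made-up sample in the assumed shape, for illustration only.
sample = [
    {"kind": "Listing", "data": {"children": []}},
    {"kind": "Listing", "data": {"children": [
        {"kind": "t1", "data": {"author": "alice", "body": "first", "replies": {
            "kind": "Listing", "data": {"children": [
                {"kind": "t1", "data": {"author": "bob", "body": "reply",
                                        "replies": ""}},
                {"kind": "more", "data": {"children": ["abc"]}},
            ]}}}},
        {"kind": "t1", "data": {"author": "carol", "body": "second",
                                "replies": ""}},
    ]}},
]

for depth, author, body in flatten_comments(sample):
    print("  " * depth + f"{author}: {body}")
```

Note that this sketch simply skips the "more" placeholders, so very large threads would come back incomplete.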

This set of functions is far from comprehensive, but is sufficient to demonstrate the concept and can easily be adapted to call additional Reddit JSON endpoints. The JSON calls are rate-limited, and in most cases require a pause after roughly 100 calls; the retry function in the above code handles this automatically by sleeping after each failed request and trying again, with progressively longer pauses.
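To make the timing of those pauses concrete, the sketch below mirrors the pause arithmetic of the retry function using the same default values (10 attempts, a 20-second initial pause, a multiplier of 1.8, and a 900-second cap) and lists the sleeps that would occur if every attempt failed. The helper name `pause_schedule` is hypothetical and does not appear in the code above.

```python
def pause_schedule(retries=10, backoff=1.8, pause=20, max_pause=900):
    """Return the sleep durations (in seconds) used if every attempt fails."""
    pauses = []
    while retries > 0:
        retries -= 1
        # No sleep follows the final failed attempt.
        if retries > 0:
            pauses.append(pause)
            pause = min(max_pause, pause * backoff)
    return pauses


schedule = pause_schedule()
print([round(p, 1) for p in schedule])
print("worst-case total wait:", round(sum(schedule) / 60, 1), "minutes")
```

With these defaults, a request that never succeeds ties up the script for close to an hour before retry gives up and returns None, which is worth keeping in mind when downloading thread detail for hundreds of posts.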

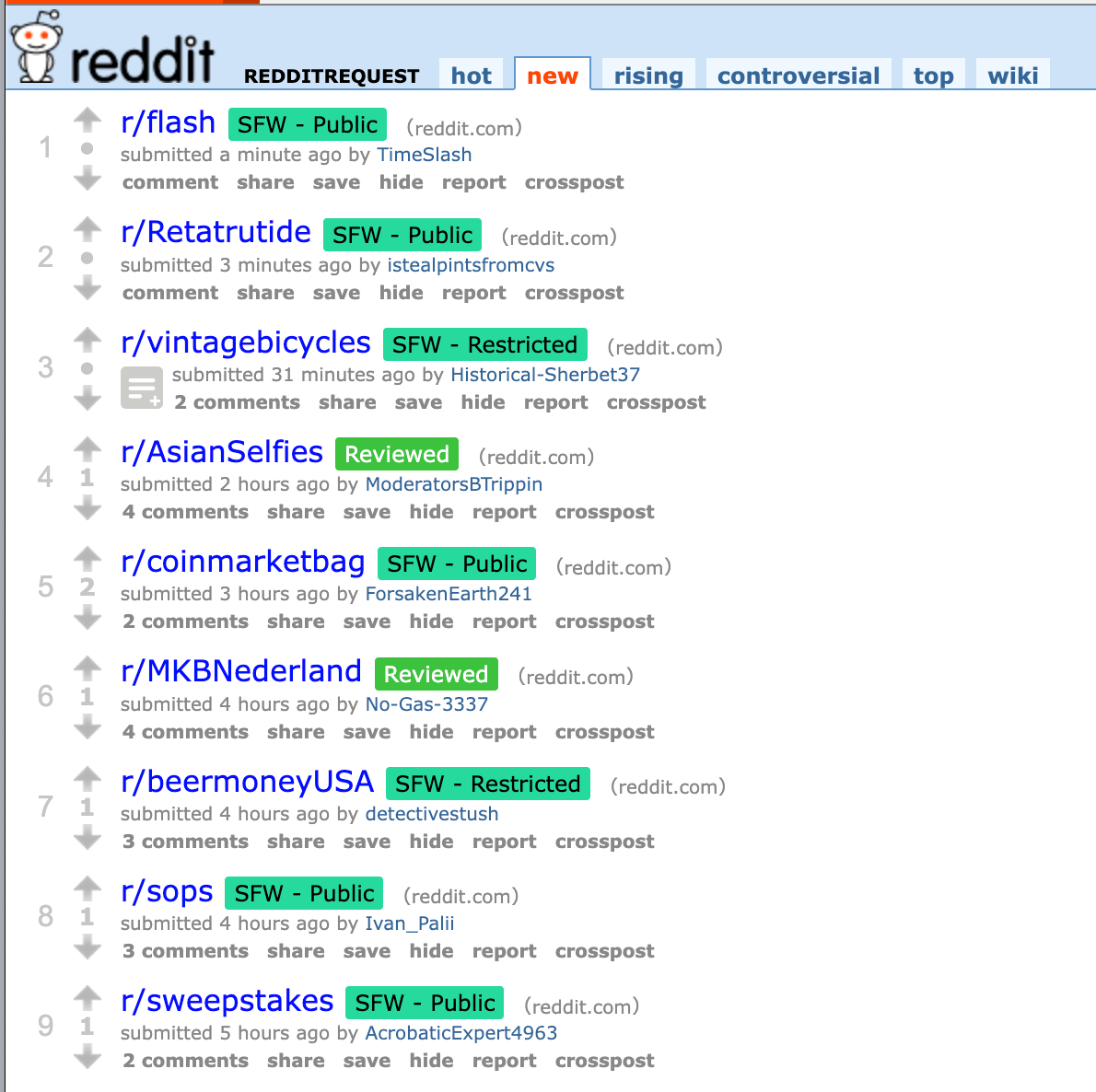

As a brief test of the above Python code, I downloaded recent posts from the /r/redditrequest subreddit and took a look at the accounts posting there. For those who are unfamiliar, /r/redditrequest is a forum where Reddit users with accounts in good standing can request control of unmoderated or banned subreddits. While this process does serve a valuable purpose, it is unsurprisingly also abused from time to time by users who are simply seeking to take control of subreddits with desirable names and have no intention of moderating them in good faith.

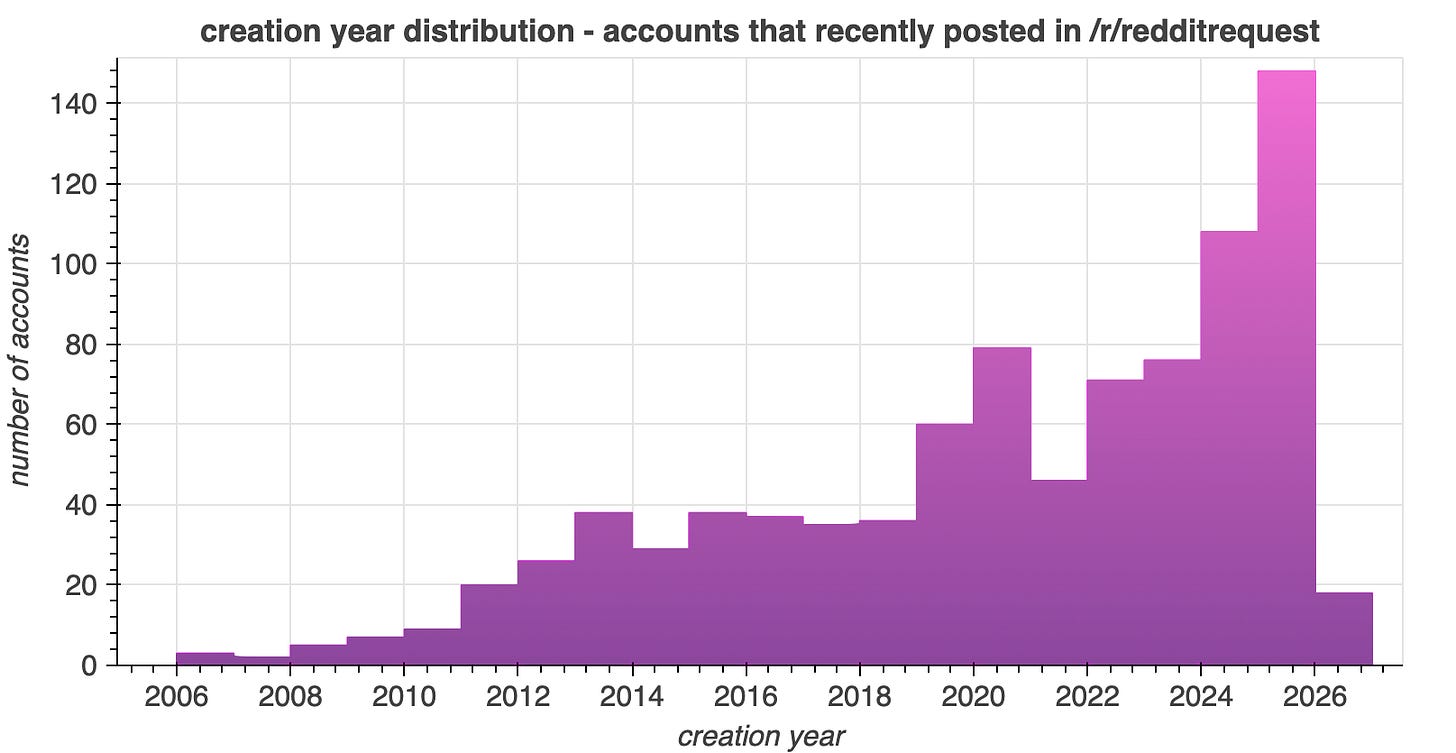

Downloading recent posts from /r/redditrequest yielded 927 posts from 895 distinct accounts, four of which were suspended before the download process completed. The accounts posting in /r/redditrequest are disproportionately recent creations, with more accounts created in 2025 than in any prior year. This pattern is somewhat more interesting for accounts expressing interest in moderation than it would be for Reddit users as a whole, as the moderators of active subreddits are generally experienced users who have been on the platform for years.
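A tally of account creation years like the one described above can be sketched as follows, assuming each profile is a dictionary with a `created_utc` field holding a Unix timestamp, and that profiles lacking this field (assumed here to include suspended accounts) should be skipped. The function name `creation_year_counts` is hypothetical, and the sample profiles are invented for illustration; they are not the /r/redditrequest data.

```python
from collections import Counter
from datetime import datetime, timezone


def creation_year_counts(profiles):
    """Count accounts per creation year from a list of profile dicts."""
    years = Counter()
    for profile in profiles:
        created = profile.get("created_utc")
        # Skip profiles without a creation time (assumed here to be
        # the case for suspended accounts).
        if created is None:
            continue
        years[datetime.fromtimestamp(created, tz=timezone.utc).year] += 1
    return years


# Made-up profiles for illustration only; not data from the article.
profiles = [
    {"name": "example_a", "created_utc": 1577836800},  # 2020-01-01 UTC
    {"name": "example_b", "created_utc": 1735689600},  # 2025-01-01 UTC
    {"name": "example_c", "created_utc": 1738368000},  # 2025-02-01 UTC
    {"name": "example_d", "is_suspended": True},
]
for year, count in sorted(creation_year_counts(profiles).items()):
    print(year, count)
```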

Among the accounts posting to /r/redditrequest are multiple accounts created within the last six months that appear to be attempting to take over as many subreddits as possible. Accounts engaged in this activity generally focus on a specific theme; the above collage shows examples of accounts of this type that are aggressively acquiring Indian celebrity and NSFW subreddits.
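One rough way to surface accounts of this kind is to count posts per author and keep only the recently created accounts that post repeatedly. The sketch below assumes posts in the shape returned by a subreddit listing (with the author name under `data.author`) and a dictionary of profiles keyed by account name; the function name `recent_frequent_posters` and both thresholds (`max_age_days`, `min_posts`) are arbitrary illustrative choices, and the sample data is invented.

```python
from collections import Counter

SECONDS_PER_DAY = 86400


def recent_frequent_posters(posts, profiles, now, max_age_days=183, min_posts=2):
    """Return (author, post_count) pairs for young accounts that post often.

    posts: post objects as returned in a subreddit listing
    profiles: dict mapping account name to its profile data
    now: reference time as a Unix timestamp
    """
    counts = Counter(post["data"]["author"] for post in posts)
    cutoff = now - max_age_days * SECONDS_PER_DAY
    result = []
    # most_common() yields authors from most to fewest posts.
    for author, count in counts.most_common():
        created = profiles.get(author, {}).get("created_utc")
        if created is not None and created >= cutoff and count >= min_posts:
            result.append((author, count))
    return result


# Made-up data for illustration only.
posts = [{"data": {"author": name}} for name in
         ["new_account", "old_account", "new_account",
          "old_account", "new_account", "other_account"]]
profiles = {
    "new_account": {"created_utc": 1740000000},
    "old_account": {"created_utc": 1500000000},
}
print(recent_frequent_posters(posts, profiles, now=1750000000))
```

A filter like this only produces candidates; deciding whether an account is actually hoarding subreddits still requires looking at what it requested and what it already moderates.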

This article is intended as a basic introduction to harvesting and examining Reddit data via the Reddit JSON interface. There is substantially more to study in deeper detail, both regarding /r/redditrequest and Reddit data in general, and future articles here will delve further into such topics.