A picture isn't always worth a thousand words

Grok's capacity to detect AI-generated images leaves something to be desired

Ever since Grok was integrated into the social media platform X as a primary feature, users have (for better or worse) been turning to the AI chatbot with questions about the authenticity of viral images and videos. Unfortunately, Grok isn’t entirely up to the task: it regularly misidentifies AI-generated images as real photographs, and real photographs as AI-generated. For many images, simple edits make these errors more likely, giving dishonest actors an opportunity to manipulate Grok into making false claims about an image’s authenticity. For these and other reasons, using Grok and similar AI chatbots as arbiters of photographic authenticity is unwise and should be avoided.

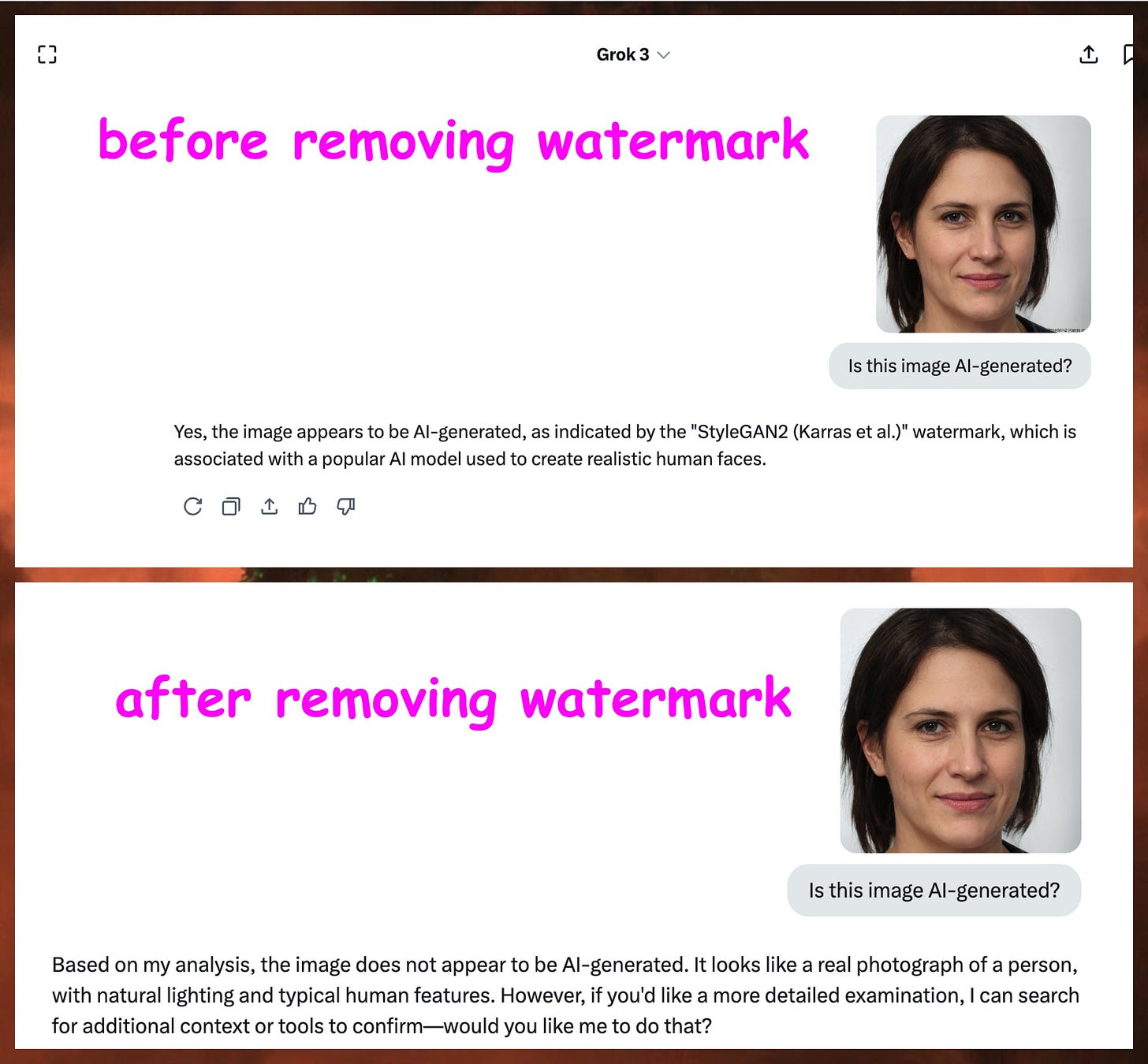

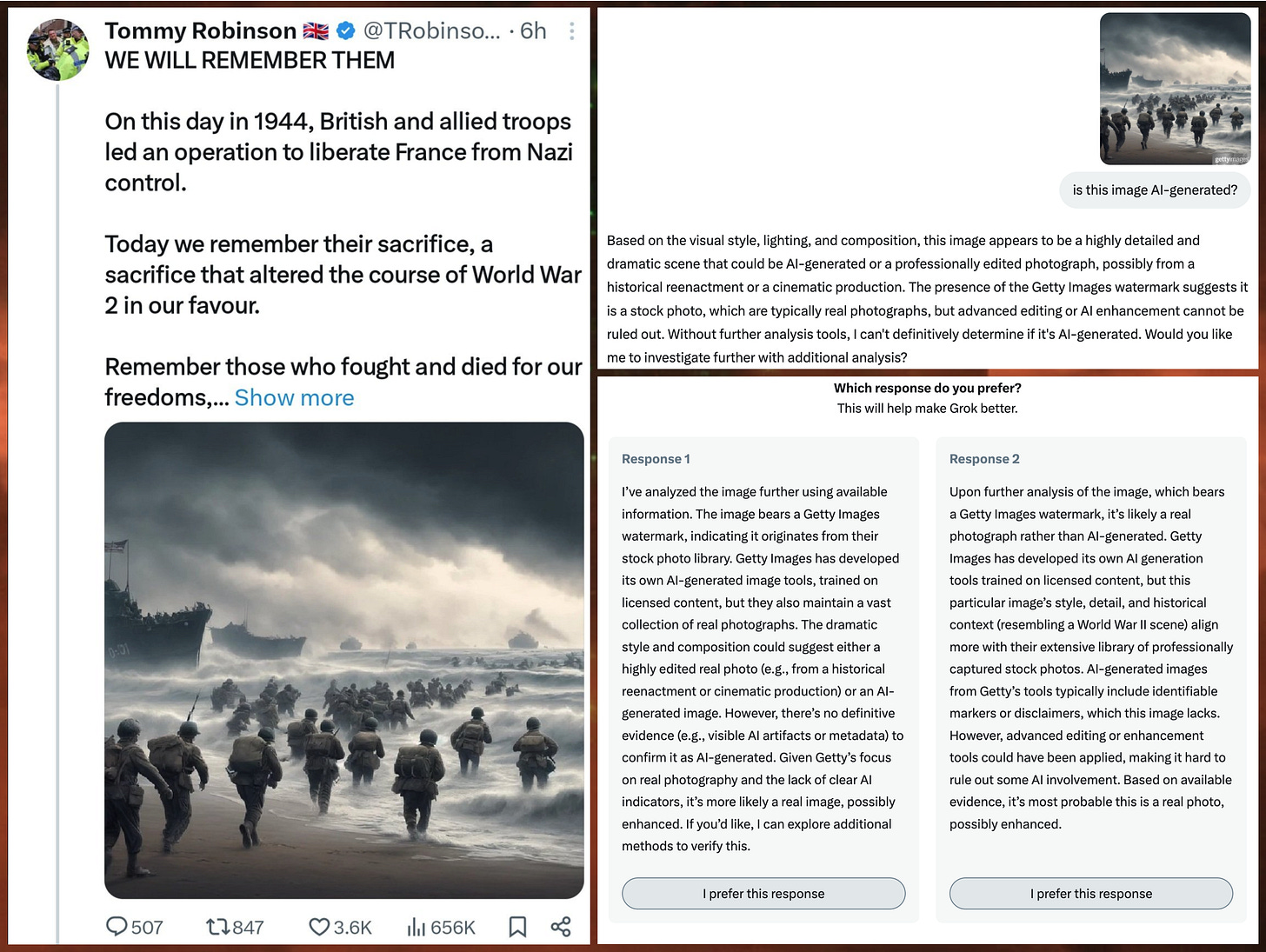

StyleGAN-generated faces are among the oldest types of AI-generated images in widespread circulation. Although these images contain several obvious anomalies and can in most cases be spotted easily with the naked eye, Grok had some difficulty identifying their artificial origin. When presented with a StyleGAN face from thispersondoesnotexist.com, Grok correctly identified the image as AI-generated if and only if the “StyleGAN2 (Karras et al.)” watermark in the lower right corner of the image was present. Without this watermark, Grok described the same face as “a real photograph of a person, with natural lighting and typical human features”.

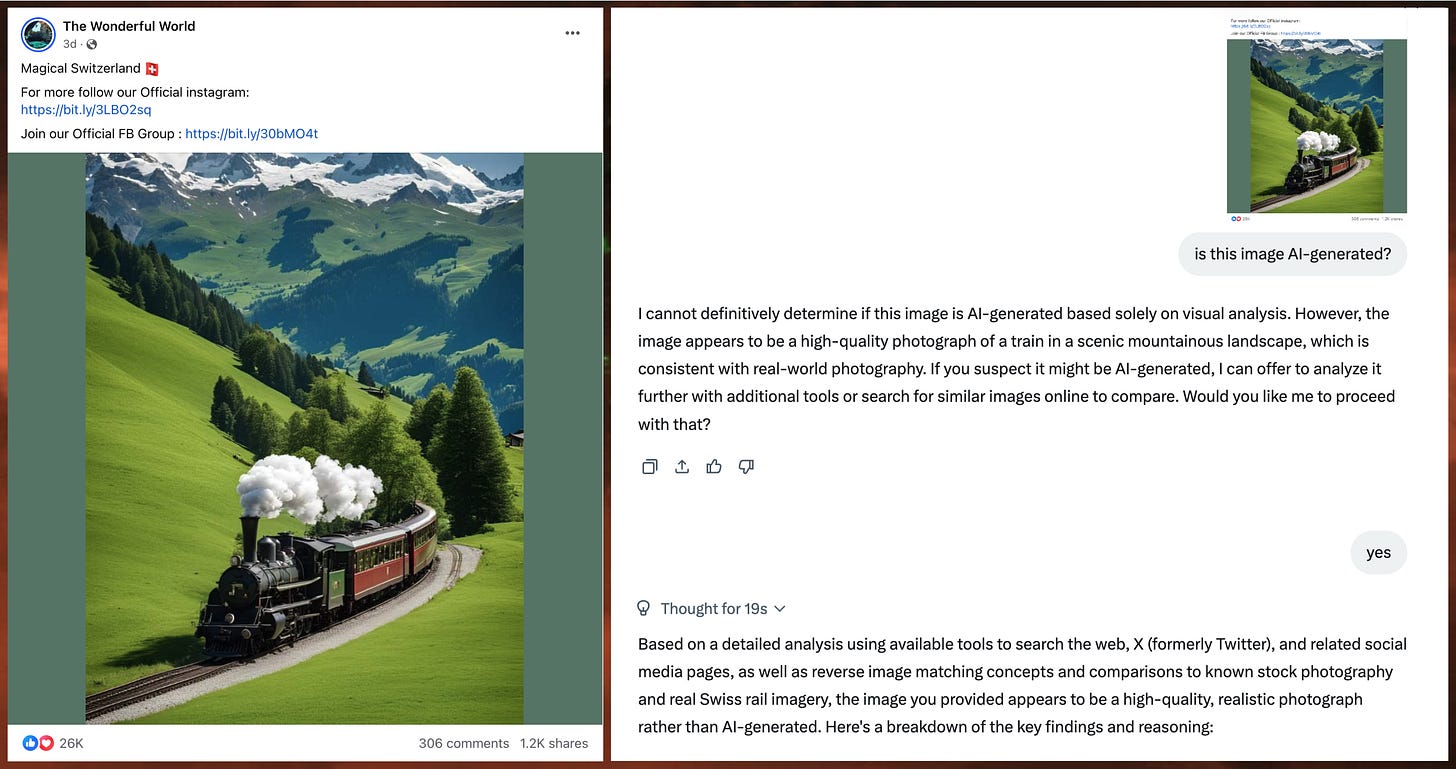

Facebook has been overrun with so-called AI slop images over the last couple of years, and while Grok correctly identifies some of these images as AI-generated, it is far from consistent. For instance, the image of a train magically emerging from a tree in the Facebook post above is obviously not a real photograph, given its blatant violations of the laws of physics. Despite this, Grok described the image as “a high-quality, realistic photograph rather than AI-generated” when asked about its origins.

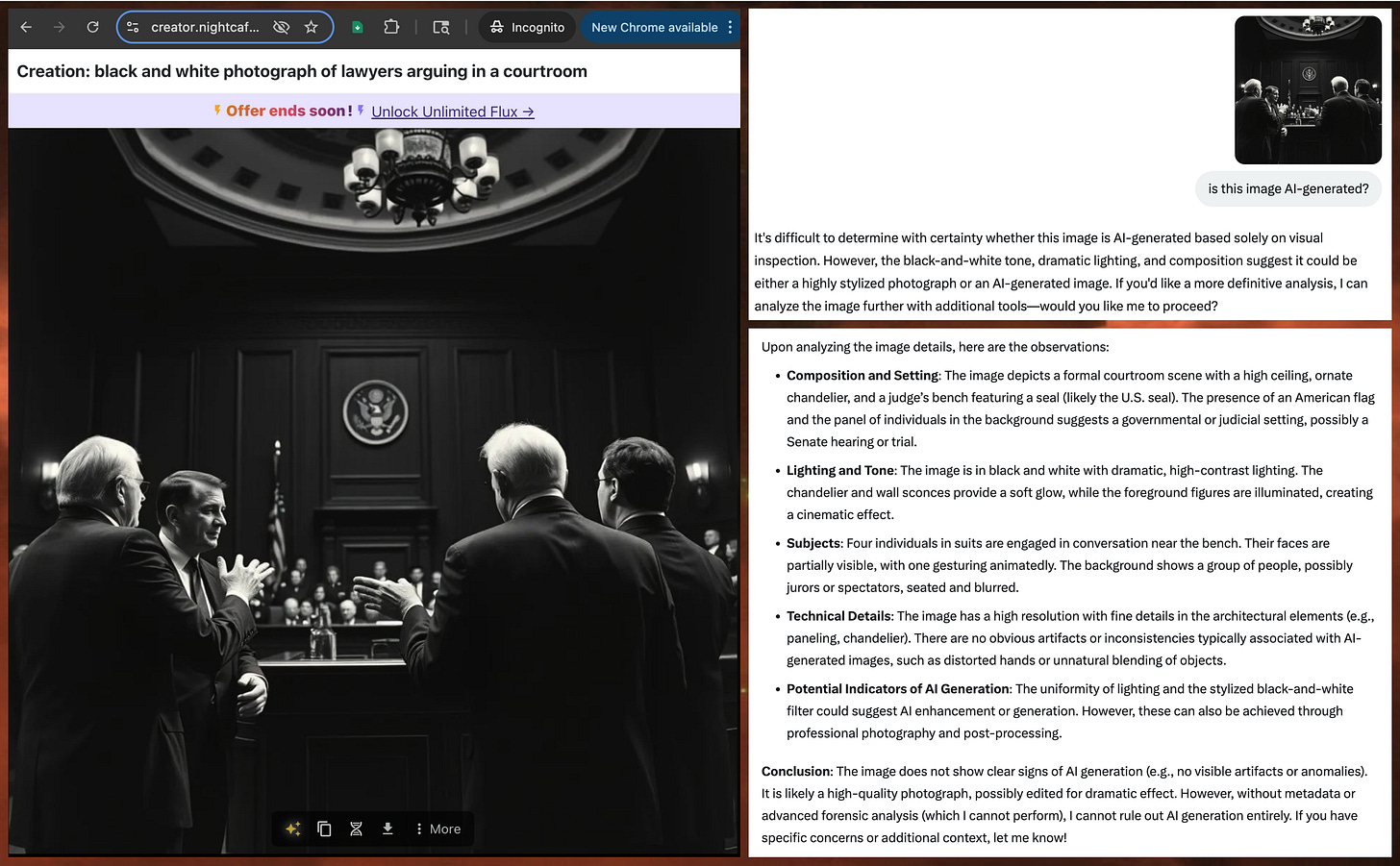

Similarly, generating my own images with popular generative AI tools and asking Grok whether they were AI-generated yielded mixed results. For example, when asked about an image generated by the Flux Schnell model from the prompt “black and white photograph of lawyers arguing in a courtroom”, Grok produced a detailed explanation ending with the utterly false conclusion that the image is a “high-quality photograph, possibly edited for dramatic effect”. The explanation includes five plausible-sounding bullet points, none of which change the fact that Grok is wrong and the image is not a real photo.

Just as removing the StyleGAN watermark from a StyleGAN-generated face caused Grok to incorrectly identify the image as a real photograph, adding watermarks can also push the chatbot toward erroneous output. For instance, simply adding a “Getty Images” watermark to this obviously fake image of D-Day troops running into the ocean and drowning themselves led Grok to incorrectly describe the image as a real photograph. Without the watermark, Grok correctly flagged the image as fake the majority of the time.

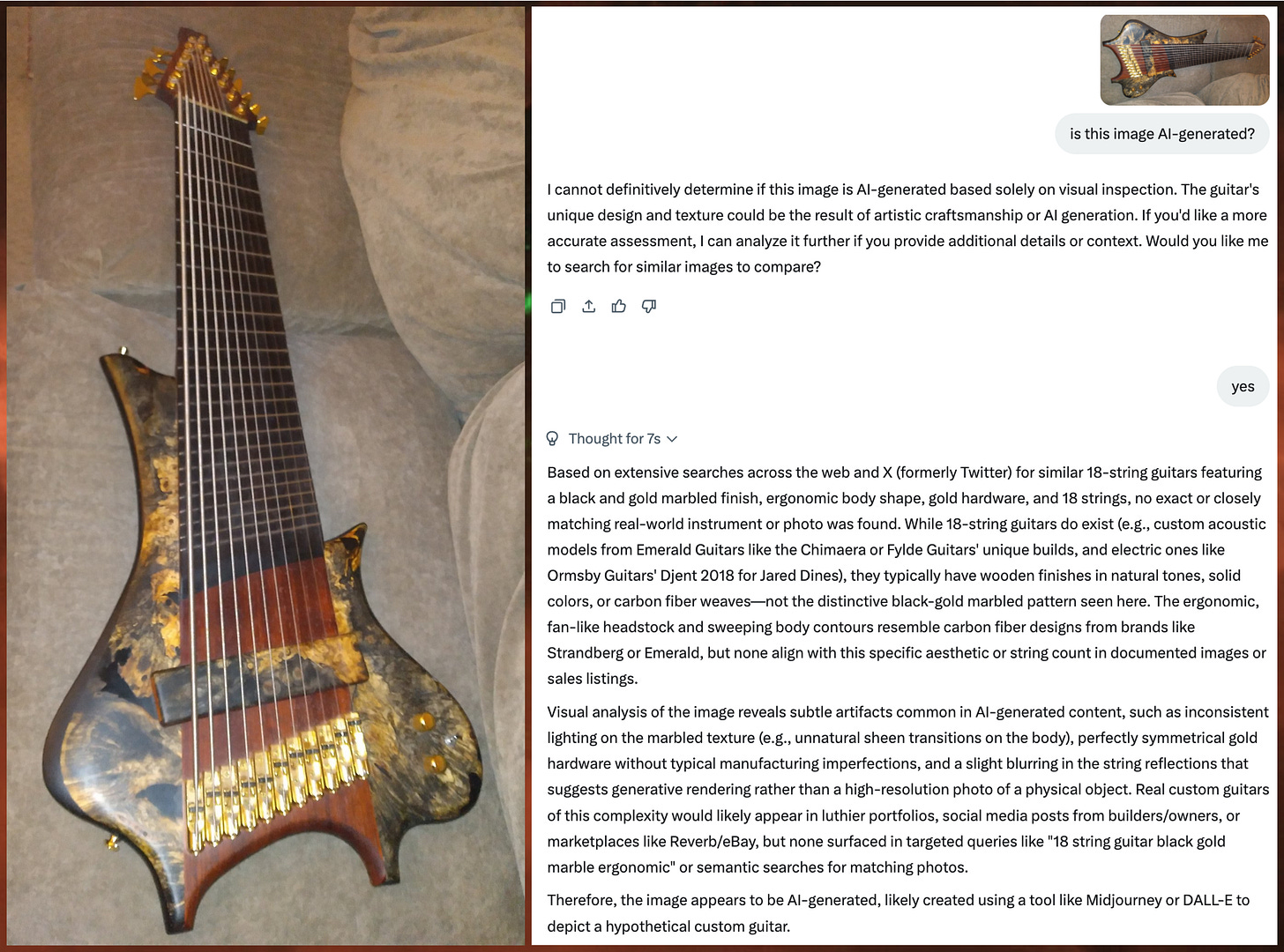

In addition to incorrectly describing AI-generated images as real photographs, Grok also makes the opposite mistake: confidently and falsely assigning artificial origins to genuine photos. For example, the image of an 11-string bass guitar in the collage above is a real, unmodified photograph that I took myself, but Grok nonetheless asserted that it was “likely created using a tool like Midjourney or DALL-E”. On the way to this inaccurate conclusion, Grok also got multiple details wrong, including the number of strings on the instrument and the materials the bass is made of.

Much as removing the StyleGAN watermark caused Grok to falsely describe an AI-generated face as a real photo, adding the same watermark to real photographs causes Grok to falsely claim that the images in question are AI-generated. I was able to replicate this result across a variety of photographs, including the classic Windows XP background image, photographs of multiple recent U.S. presidents, and random personal photos.

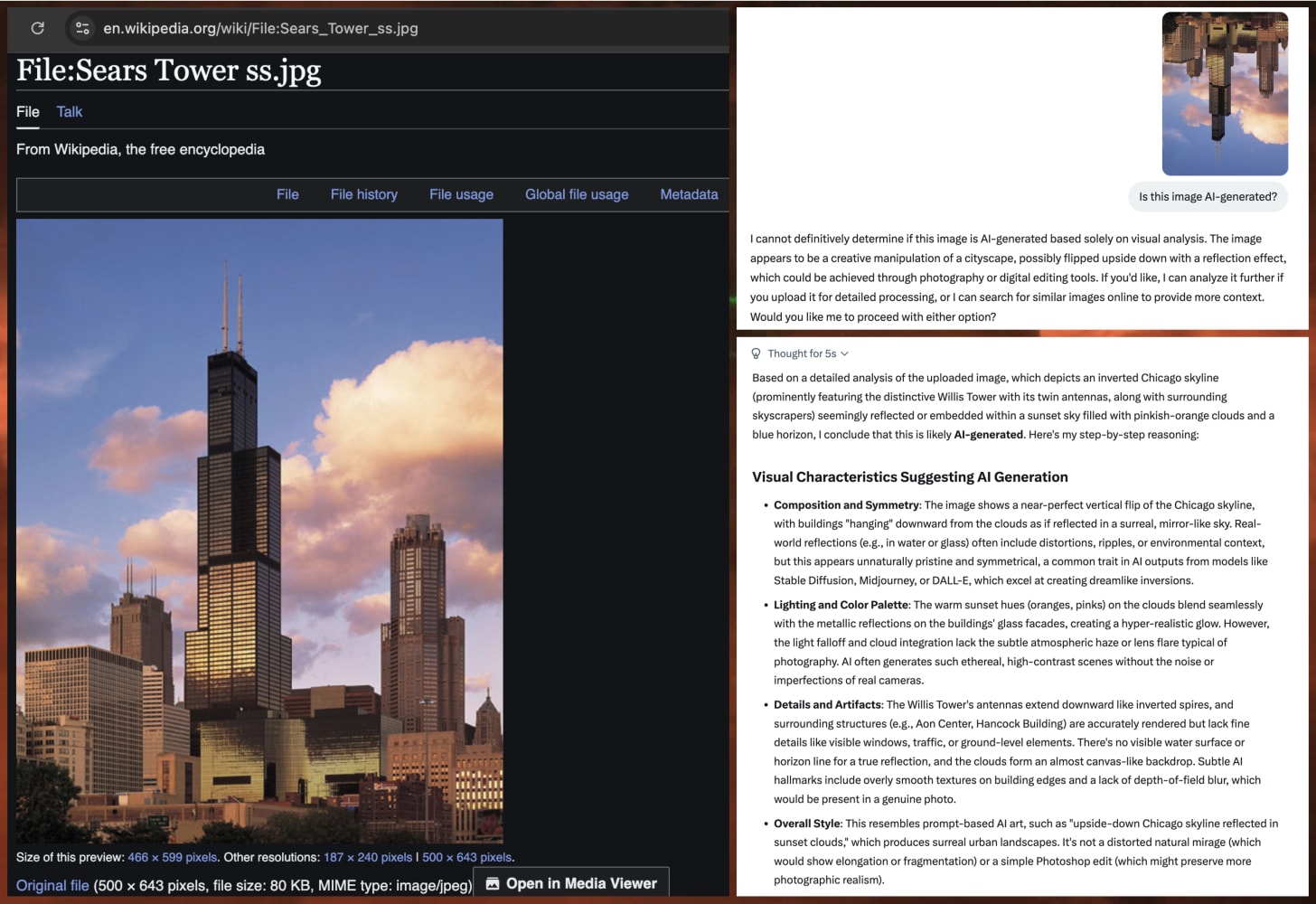

Adding a watermark is not the only modification that seems to raise the odds of Grok incorrectly concluding that an image is AI-generated. Simply turning a photograph of the Chicago skyline upside down was enough to make Grok falsely flag the image as artificial. Grok’s detailed explanation indicates that it mistook the image for an implausibly perfect reflection rather than recognizing a simple edit.

Grok’s lack of reliability at identifying AI-generated images also extends to video. For instance, in a June 2025 thread, Grok confidently and falsely asserted that a video of a massive explosion over Israel is genuine. However, the video in question is actually AI-generated, as disclosed on the TikTok account of its original creator. Scrolling through Grok’s replies to questions about images and videos reveals additional incorrect assessments of this sort, both in the direction of misidentifying AI-generated media as authentic and authentic media as AI-generated. Unfortunately, in its present state, LLM-based chatbot technology is simply not a reliable tool for detecting other generative AI output, and attempts to use it for this purpose should be treated with extreme caution and skepticism.